So far I’ve mostly used AI systems for search and, in a very basic form, for AI-assisted coding. But the world keeps screaming AI, and I have recently seen a number of interesting use cases that might be useful to me and that have sparked further interest. As the questions and potential use cases kept piling up, I decided to gather them and make a learning journey out of the exercise. Reflecting and documenting is part of this process, so I decided that a good way to do this is to start ‘a little series’ on this blog. This way, I can later remember what I did and learnt, and at the same time I might give you some ideas and practical tips should you be interested in the topic. That being said, here’s the list of topics I would like to address over the next few weeks:

Basic Questions

How do LLMs work on the inside? I’m looking for a short, high-level technical primer, as I would like to understand the basics, being well aware that the maths behind it is not really my cup of tea. But that’s OK: we live in a highly specialized world, and it’s important to understand the basic principles to be able to build on them. I think I have a good understanding of the layers between the silicon far down below and high-level programming languages like Python and modern server systems. From that point of view, AI and LLMs are yet another layer on top. This learning journey should help me understand how that latest layer works, what its limitations and future potential are, and how I can benefit from it.

Running LLMs Locally

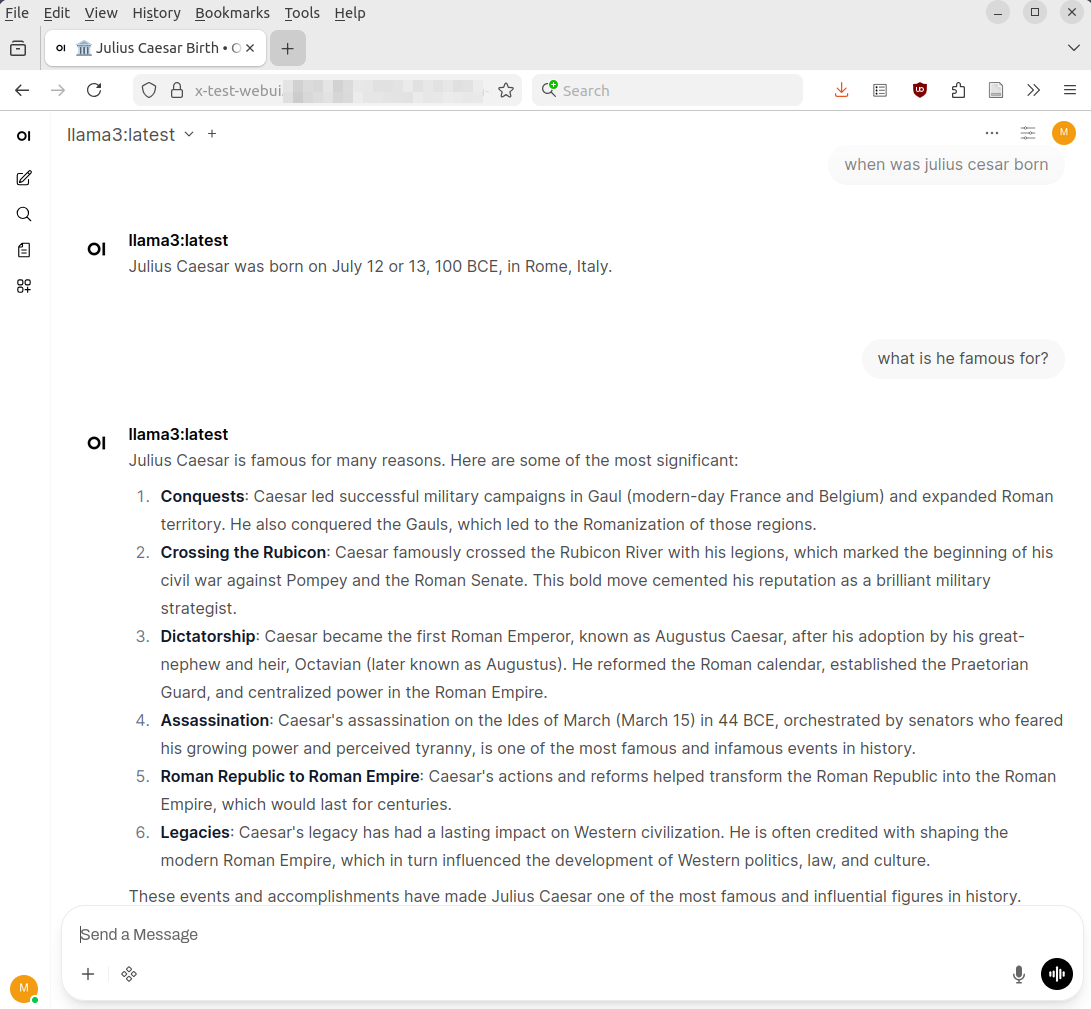

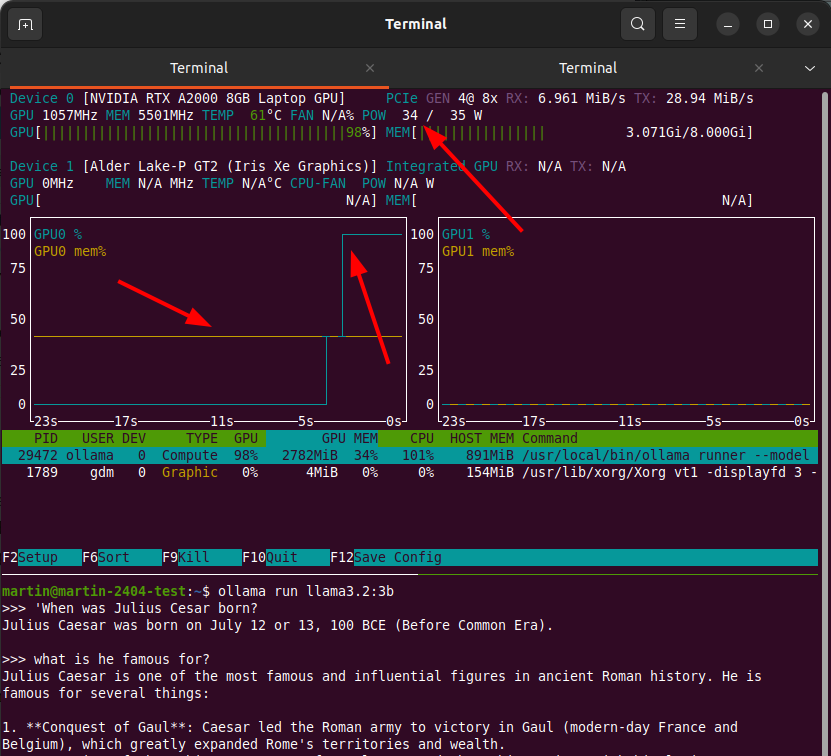

Having stuff run in the cloud outside my control creates two problems for me. First, I have no idea how much hardware and energy is really required to run LLMs. Second, I really don’t want my personal data flowing out to all sorts of data centers belonging to all sorts of big tech companies. But it’s possible to run LLMs locally, and I want to find out what is required for this and how large or small these local LLMs are compared to the models running in the cloud today that drive ChatGPT, Perplexity and many other systems. Ollama and Open WebUI are two open source projects among many that might help me here. Fortunately, I do have a bit of CPU and GPU power at home and I will put that to good use for this learning journey.
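As a first back-of-the-envelope aid for the ‘what is required’ question, here is a minimal Python sketch. It only estimates the memory needed to hold a model’s weights, which is the number of parameters times the bytes stored per parameter. The function name and the example model sizes and bit widths are my own illustrative assumptions, not measurements, and real memory use will be higher because of context and runtime overhead.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GiB.

    Assumption: every parameter is stored with the same number of bits.
    Context (KV cache) and runtime overhead are NOT included.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical model sizes (billions of parameters) and bit widths.
    for params in (3, 8, 70):
        for bits in (16, 8, 4):
            gib = weight_memory_gib(params, bits)
            print(f"{params}B parameters at {bits} bits: ~{gib:.1f} GiB")
```

Comparing the result with the RAM or VRAM of the machine at hand gives a rough first idea of which model sizes are worth trying at all.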

Renting GPU Power

A middle ground between running LLMs on premise on the one hand and ‘paying per token’ for LLMs running in big data centers on the other is to rent virtual machines or Docker containers with GPUs attached, on which LLMs can be run (somewhat) privately as well. So would that work for me, and what is the cost involved?
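To make the cost question a bit more concrete, here is a small Python sketch that converts an hourly GPU rental price into an effective price per million generated tokens, so it can be compared with a ‘pay per token’ offer. All names and numbers are hypothetical placeholders that I would have to replace with real quotes and measured throughput. The `utilization` parameter is my own assumption to model that a rented machine is rarely busy all the time.

```python
def rental_cost_per_million_tokens(
    hourly_rate: float,
    tokens_per_second: float,
    utilization: float = 1.0,
) -> float:
    """Effective cost per one million generated tokens on a rented GPU.

    hourly_rate:       rental price per hour (any currency)
    tokens_per_second: measured generation speed while the GPU is busy
    utilization:       fraction of the paid time the GPU actually generates
    """
    if tokens_per_second <= 0 or not 0 < utilization <= 1:
        raise ValueError("tokens_per_second must be > 0 and utilization in (0, 1]")
    tokens_per_paid_hour = tokens_per_second * 3600 * utilization
    return hourly_rate / tokens_per_paid_hour * 1_000_000


if __name__ == "__main__":
    # Placeholder values for illustration only.
    for utilization in (1.0, 0.5, 0.1):
        cost = rental_cost_per_million_tokens(1.0, 50.0, utilization)
        print(f"utilization {utilization:.0%}: {cost:.2f} per million tokens")
```

The sketch suggests that idle time matters as much as the hourly price: the less the rented GPU is actually used, the more expensive each token becomes.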

LLMs in Open Source Software

I’ve noticed that my self-hosted office suite based on Nextcloud and OnlyOffice can also tie in AI and LLMs for things such as search, translation, help with grammar, letter writing, etc. The obvious questions are: Can I run that with the hardware that I have at home, would it be useful for me, and how does this compare to what is available in proprietary and centralized systems?

AI Assisted Coding

As mentioned at the top of this post, I’m very much interested in AI-assisted coding, which is different from vibe coding. I’ll explain later. Anyway, I have done some fairly basic stuff with this in the past, but I’ve noticed that it has now become possible to have code generated and changed on the fly through a conversation with an AI system in the code editor itself. That sounds exciting, and I want to try different options, provided the software that runs on my end remains open source and free of telemetry. Let’s see if that is possible.

Trying Out Different Systems

I have no illusions: whatever I can run on relatively inexpensive hardware at home is no match for the LLMs and systems of the big corporations. And for some things like AI-assisted programming, using remote LLMs might even work for me, as most of my code ends up as open source in a public Git repository anyway, so no privacy or secrecy is involved. To see what is possible and useful to me, I’d like to experiment with different systems to solve practical problems I have on my to-do list.

Getting to Grips with 3GPP Specs

Once upon a time, one could read 3GPP specs, understand them and at the same time get an idea of how networks out there really work. That probably ended after 3GPP Release 8. Some would say it was even earlier. The last 10 iterations of the specs over the last two decades have added a lot of stuff that is interesting at best and confusing at worst, but not used anywhere in real networks. These days, you can’t see the forest for the trees in those documents. So could an LLM help me reduce the pain and understand more quickly how the mechanisms that are actually used in real networks are specified across different 3GPP specification documents? So far I haven’t seen anything that does this, but perhaps I haven’t looked hard enough? Let’s see.

Let’s Go!

Long story short, this is not going to be a single weekend project. But it doesn’t have to be: there are so many interesting questions and possibilities around those topics in my head that this promises to be an interesting ride.