After looking into local LLMs and Retrieval Augmented Generation (RAG) in the previous post, this post focuses on how to experiment with and use public LLMs in a privacy-friendly way. The basic problem: While one can anonymously ask a limited number of questions to public LLMs such as ChatGPT, Perplexity, etc., more in-depth questions require an account and often a monthly subscription. And with an account comes the loss of privacy. But there’s an interesting solution:

LLM routing

I’m not quite sure anymore how I came across it, but there’s an aggregation platform called OpenRouter through which over 350 LLMs can be accessed. Instead of a monthly subscription to the LLMs of one particular AI company, OpenRouter gives access to all of them, including the latest ‘foundational and frontier models’.

Pay per Token

OpenRouter doesn’t have a subscription model but charges per token. The price per token depends on which model is selected for a particular question. Prices range from very cheap to very expensive, relatively speaking. To give you a general idea, I’ve so far spent around 10 euros on tokens via OpenRouter, most of them on somewhat complex projects over many days that I will describe in a follow-up post. There’s no risk of unintended overspending, as one tops up a user-defined amount and then uses the credit until it is gone. In my experiments, I topped up in increments of 5 euros and spent the 10 euros over a few days rather than just a few minutes or hours.
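To get a feeling for how per-token pricing adds up, here is a minimal sketch of the arithmetic. It assumes that a model has separate prices for input (prompt) and output (completion) tokens, quoted per million tokens. The function name and the prices used below are made up for illustration and are not the rates of any real model.

```python
def estimate_cost(prompt_tokens, completion_tokens,
                  price_in_per_million, price_out_per_million):
    """Estimate the cost of one request, in the currency of the given prices."""
    total = (prompt_tokens * price_in_per_million
             + completion_tokens * price_out_per_million)
    return total / 1_000_000


# Hypothetical prices (0.50 in / 1.50 out per million tokens), illustration only
cost = estimate_cost(prompt_tokens=1_200, completion_tokens=800,
                     price_in_per_million=0.50, price_out_per_million=1.50)
print(f"Estimated cost of this request: {cost:.4f}")
```

With numbers in this range, a single question costs a fraction of a cent, which fits the experience that a small top-up lasts for days.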

LLM Use via API Keys

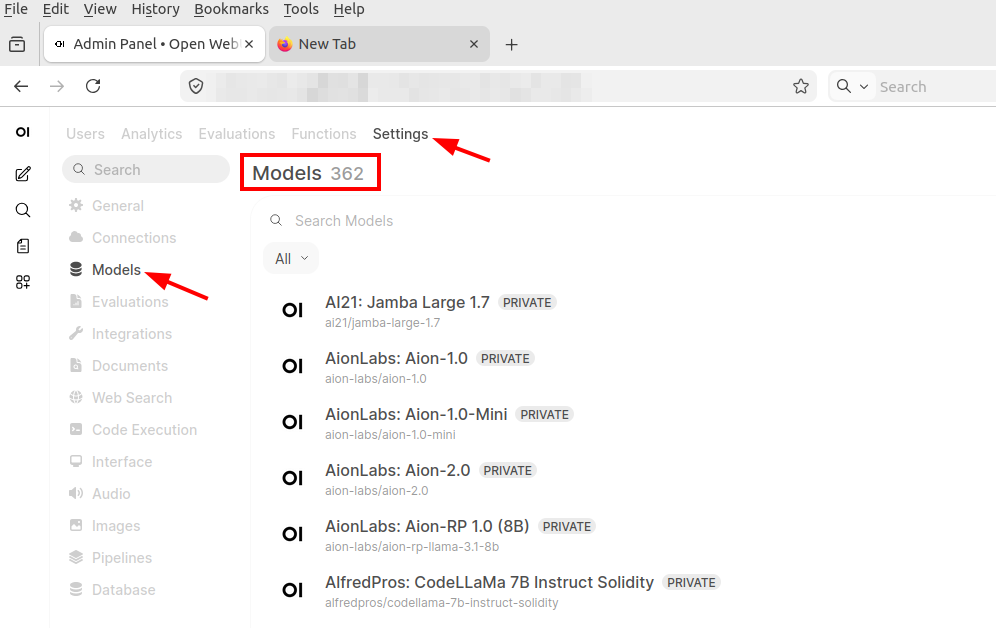

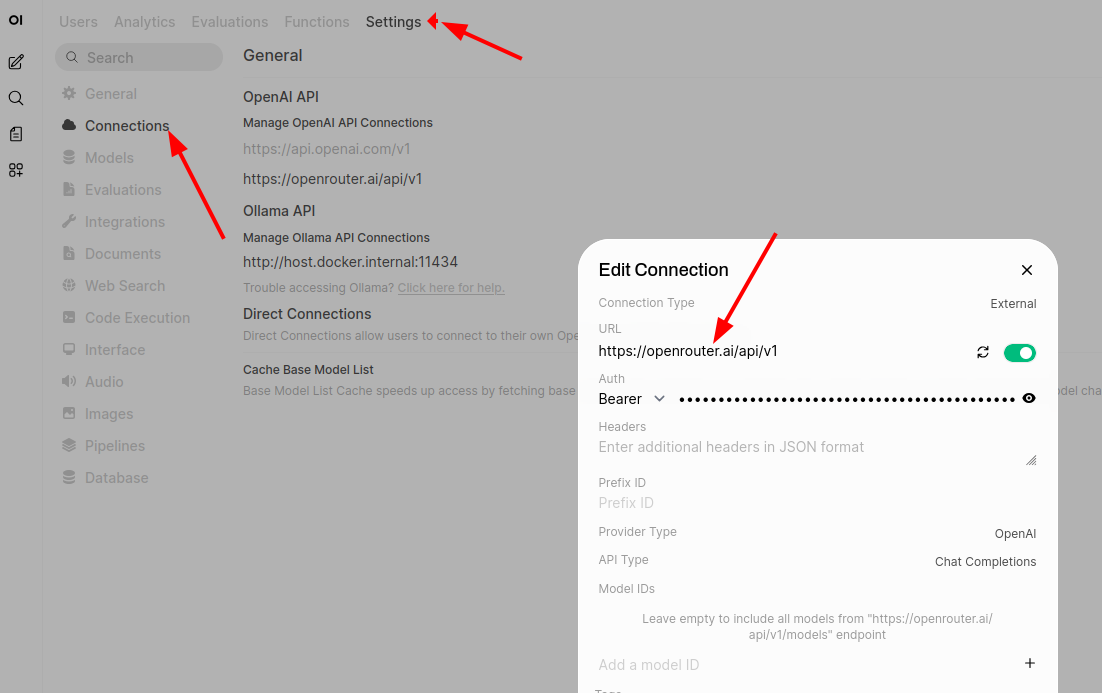

As described above, OpenRouter sees itself as an LLM routing platform. To use any of the LLMs that OpenRouter supports, one generates an OpenRouter API key and uses it with a project, e.g. my local Open WebUI user interface. Once the key is imported, Open WebUI shows all LLMs that OpenRouter supports in addition to the locally installed ones.

Note: OpenRouter also has a prompt user interface from which any of the supported LLMs can be used, but it doesn’t store chat histories, etc., because that is not its purpose, which is LLM routing. Still, it is quite useful for trying out OpenRouter before using it with an API key and a local Open WebUI instance or another project that can query LLMs with an API key.
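For projects other than Open WebUI, querying a model with an API key could look roughly like the following sketch. It assumes OpenRouter's OpenAI-compatible chat completions endpoint and uses only the Python standard library. The environment variable name `OPENROUTER_API_KEY`, the helper functions and the model identifier are choices made for this example, and the list of available model identifiers changes over time, so check the OpenRouter model list before running it.

```python
import json
import os
import urllib.request

# OpenAI-compatible chat completions endpoint of OpenRouter
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key, model, question):
    """Build the HTTP request for a single-question chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(api_key, model, question):
    """Send the question and return the text of the first answer."""
    request = build_request(api_key, model, question)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # The key is read from the environment so it never ends up in the script.
    key = os.environ.get("OPENROUTER_API_KEY")
    if key:
        # Example model identifier, an assumption that may be outdated
        print(ask(key, "openai/gpt-4o-mini", "What is LLM routing?"))
```

Switching to a different LLM is then just a matter of changing the model string, which is the whole point of a routing platform.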

Privacy

When I ask a question in Open WebUI, the question is forwarded via my personally identifiable API key to OpenRouter. From there it is forwarded via a non-personal API key to an external LLM. This way, the owner and operator of the LLM gets the data, i.e. the question, but does not know where it comes from. OpenRouter says in its terms and conditions that it does not store questions, answers or histories, which also prevents personal profile generation. So from a privacy point of view I like this setup much better than signing up for a monthly subscription for ChatGPT, Perplexity, etc.

My Open WebUI instance at home, on the other hand, stores all of this, so I can go back to my earlier conversations and review them while all of this information is stored privately. Fantastic!

Still, one has to be cautious not to use personally identifiable information in prompts, as one has no control over where this data is sent, how it is analyzed, and where it is stored. So, for example, I would definitely not use such a setup to translate a confidential text.
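One way to reduce the risk of accidentally leaking something is to scrub the most obvious identifiers from a prompt before it leaves the local machine. The following is only a rough sketch with two simple regular expressions for email addresses and phone numbers. The patterns and the `redact` helper are assumptions made for this example, they will miss many cases (names, addresses, customer numbers) and are no substitute for reading a prompt before sending it.

```python
import re

# Deliberately simple patterns, not a complete PII detector
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s()/-]{6,}\d")


def redact(prompt):
    """Replace email addresses and phone-like numbers with placeholders."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return PHONE.sub("[PHONE]", prompt)


print(redact("Mail jane.doe@example.com or call +49 170 1234567 today"))
# prints: Mail [EMAIL] or call [PHONE] today
```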

Also, one has to trust OpenRouter not to store anything. The company is based in the US, so that is another thing one has to take on trust. I faintly remember there is a European-based alternative available, but I decided not to investigate further for the moment, as I’m still in the experimentation phase. But it’s on the to-do list.

Summary

I very much like the combination of a local Open WebUI instance that stores interactions and private data, and OpenRouter, which lets me use over 350 LLMs and anonymizes my interactions. One still has to be careful when it comes to privacy and personal data, but in terms of privacy and anonymity this setup goes well beyond subscription-based LLM offers that generate a personal profile.