It is interesting to see how the users of my Nextcloud keep pushing previously existing limits, real or perceived. Back in 2018 I wrote a post on how to increase the maximum file size Nextcloud could handle, and I was happy to see that I could easily go up to 5 GB files. I didn’t push it beyond that as I couldn’t imagine anyone would want to send bigger files. But recently, I was asked if a 20 GB file could be stored on my Nextcloud for sharing, and I have to admit I was skeptical. So I set out to find out whether and how this would work in practice.

One thing I noticed straight away was that in Nextcloud 18 there is no maximum file size setting in the administrator panel anymore. I also couldn’t find any helpful information about current file size limits. All the discussions I came across focused on limits in older Nextcloud versions.

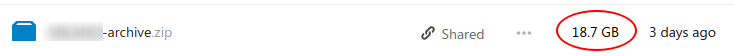

In the end I just assembled that 20 GB zip file and uploaded it over the web interface. On the local network that took around 6 minutes and, to my great surprise, it actually worked. So I downloaded it again and compared the md5 checksums of the original file I uploaded and the downloaded copy. They matched, so it really did work. I played around some more and noticed the upload is actually chunked. In the background, chunks of a hundred MB or so were uploaded one by one. Once all chunks have been transmitted, they are assembled again into one big file. So that’s how Nextcloud gets around PHP’s upload file size limit and perhaps also around HTTP timeouts.
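For files of this size it helps to compute the checksum in pieces rather than loading 20 GB into memory. Here is a minimal Python sketch of the comparison I did by hand, assuming the original and the downloaded copy are both available as local files. The function names and the 8 MB read size are my own choices for illustration and have nothing to do with Nextcloud itself.

```python
import hashlib


def file_md5(path: str, chunk_size: int = 8 * 1024 * 1024) -> str:
    """Return the hex MD5 digest of a file, reading it in fixed-size pieces."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        # Read until read() returns an empty bytes object (end of file).
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def files_match(original: str, downloaded: str) -> bool:
    """True if both files have the same MD5 digest."""
    return file_md5(original) == file_md5(downloaded)
```

Calling `files_match("original.zip", "test1.zip")` (hypothetical file names) returns `True` when the round trip left the file intact. The digest is the same one the `md5sum` command line tool prints.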

Speaking of HTTP timeouts: since the file was uploaded for sharing, I was wondering if it could be downloaded at the line rate of 40 Mbit/s I have available in the uplink direction of my server. This would take around 1.5 hours. Would the web browser or the server time out in the meantime? Surprisingly, this worked as well, and the md5 checksum turned out to be correct. O.k., so let’s push this, I thought, and I limited the transfer speed to 10 Mbit/s so the download would take around 5 hours. wget and curl with their speed limiting options are your friends. Here’s an example of how to do a ‘slow-speed’ test at a rate of 1 Mbyte/s, i.e. 8 Mbit/s:

```shell
curl --limit-rate 1M -C - https://www.my-nextcloud.com/nc/index.php/s/Xjgiue478anoz/download -o test1.zip
```

Again, somewhat to my surprise, the web server did not time out over 5 hours and I got a 20 GB file that matched the original’s md5 checksum.
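To get a feeling for how long such a download should take, the durations can be estimated with simple arithmetic. The following is a small Python sketch under two assumptions of mine: a gigabyte is counted as 10^9 bytes, and protocol overhead is ignored. The results are therefore idealized lower bounds, and real downloads take longer because of overhead and fluctuating throughput.

```python
def transfer_time_hours(size_gb: float, rate_mbit_s: float) -> float:
    """Ideal transfer time in hours for a file of size_gb at rate_mbit_s."""
    size_bits = size_gb * 1e9 * 8               # decimal gigabytes to bits
    seconds = size_bits / (rate_mbit_s * 1e6)   # Mbit/s to bit/s
    return seconds / 3600


# 20 GB at the full uplink rate, at 10 Mbit/s and at curl's 1 Mbyte/s limit
for rate in (40, 10, 8):
    print(f"{rate:>2} Mbit/s: {transfer_time_hours(20, rate):.1f} h")
```

At curl’s `--limit-rate 1M`, i.e. 8 Mbit/s, the estimate for 20 GB comes out at about 5.6 hours, so the server has to keep the connection alive for most of a working day.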

The only thing that did not work in all of this was uploading the 20 GB file over a DAVS (WebDAV over HTTPS) share in the Nautilus file manager on my PC. The 20 gigabytes are pushed to the server just fine, but the file never makes it into the Nextcloud directory. I also couldn’t see where the incoming data was temporarily stored, which was all a bit strange. But since the web interface worked, I didn’t follow this up.

At the end of the exercise, I was happy to see that 20 GB files are not a problem, provided, of course, that you have enough storage capacity on the server. Which makes me wonder where the limit is these days.