After the (virtual) Vintage Computing Festival Berlin is before the (virtual) festival, and I’m in the process of cutting, upscaling, loudness-normalizing and re-encoding the video stream dump into presentations for media.ccc.de. Ffmpeg is a great tool for this, and with the right GPU hardware the process probably flies. But I’m not a gamer and don’t regularly modify and cut video streams, so I don’t have that. As a result, going through all the steps to produce the final video of a presentation takes a Lenovo X250 notebook (2 cores / 4 threads) around one hour for each hour of video material. Lacking a proper GPU, I thought I’d do the next best thing and just throw more, and more powerful, CPUs at the problem, the kind one can rent in the cloud. A virtualized setup with 16 dedicated vCPUs for 166 euros a month, rentable on a per-minute basis, should do the trick. Or so I thought…

So I uploaded around 40 GB of stream dumps to the cloud server, expecting at least a factor of 10 speed improvement in the ffmpeg cut/upscale/loudness/re-encoding process. For one thing, that’s 8 times the number of processors compared to my X250 notebook. Also, those Xeon-based processors should be much more powerful than the power- and heat-optimized CPUs in my notebook.

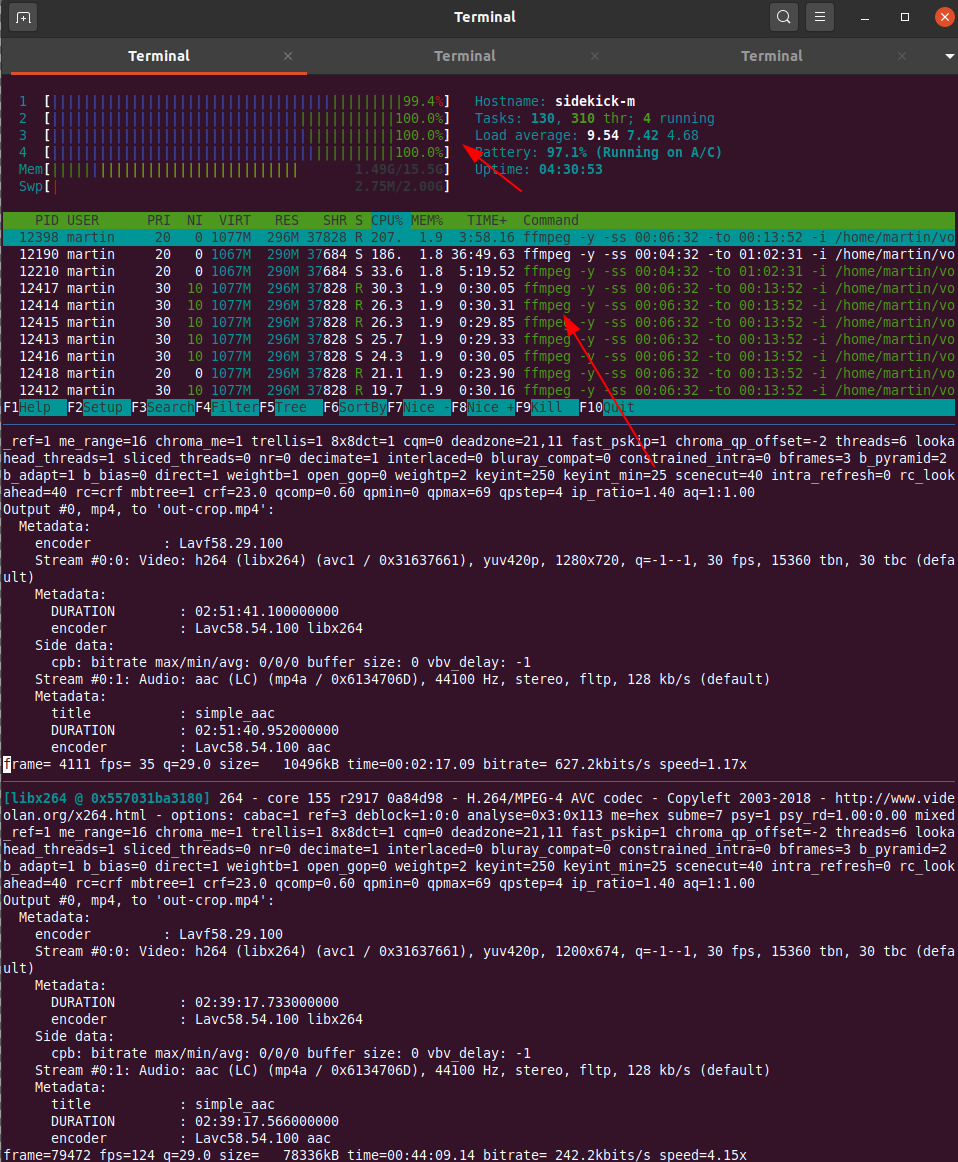

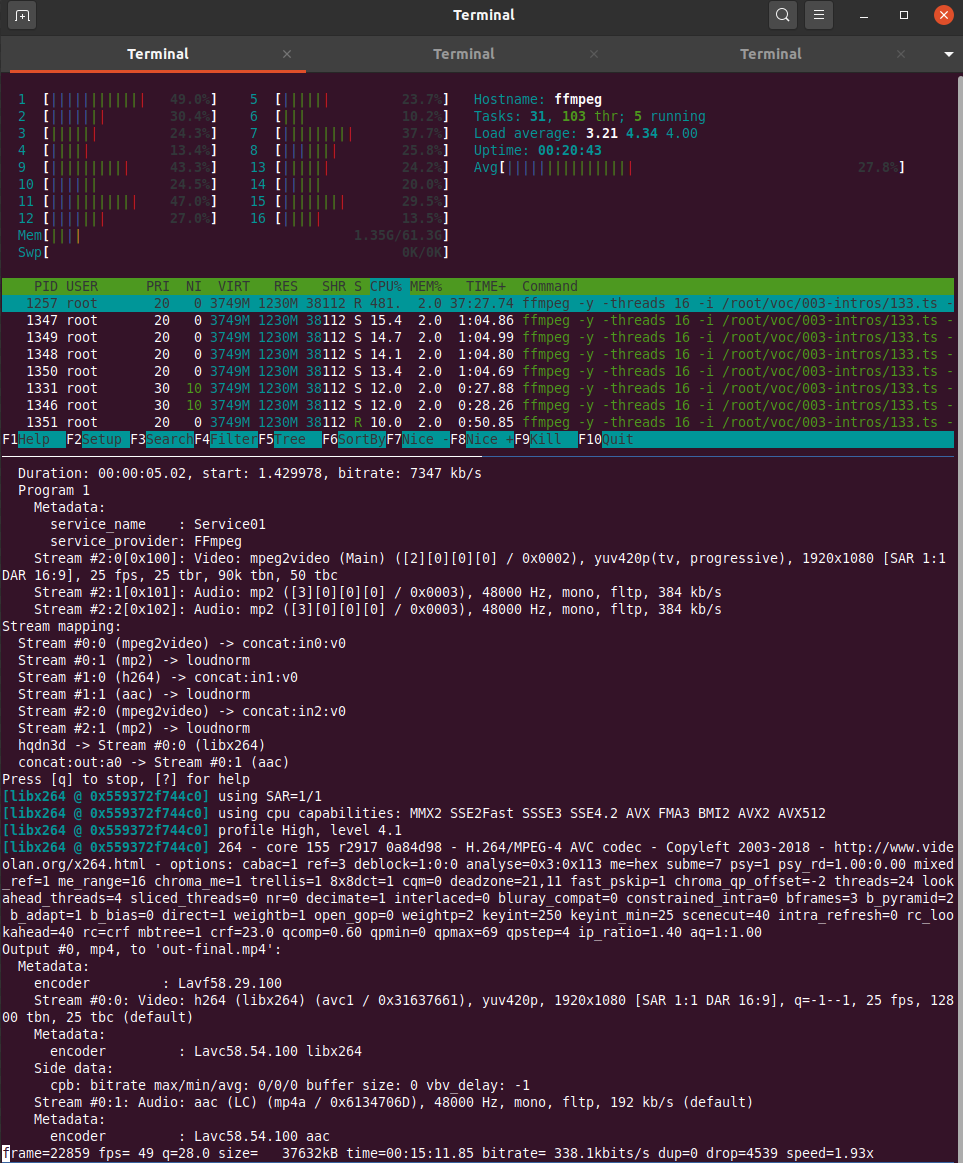

What I got for the effort was rather disappointing, however. Instead of the 10x speed improvement, which I thought was a rather conservative expectation, I got a meager factor of two! What takes 1 hour on my notebook took 30 minutes on the 16-vCPU server. It seems that ffmpeg can’t distribute its workload across all 16 vCPUs at once; there seems to be a limit somewhere. On my notebook, the 2 cores with their 4 threads ran at 100%, while the cloud server CPUs ran at a modest 30-60% load on the same input data stream. So the comparison would probably look better if I ran several ffmpeg instances simultaneously, each working on a different presentation video. But even at that low CPU load I would still have expected a significant speed increase, as Xeon CPUs should run circles around mobile i5 CPUs from back in 2015.

It leaves me a bit baffled. I am no ffmpeg expert; perhaps the difference is so small because on the notebook ffmpeg can use some hardware for encoding or transforming the stream that is not present on the Xeon-based cloud server. I am aware that ffmpeg can be compiled to support different kinds of hardware, so perhaps that would have helped as well. But I just wanted to throw money at the problem, not get into the fine details of video encoding. Obviously the wrong approach.

So instead of using that mighty 16 vCPU cloud server, I have opted to prepare the ffmpeg commands for each video and let 3 or 4 of them run simultaneously overnight. But still, it strikes me as odd.
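Running a handful of prepared ffmpeg commands side by side could look roughly like the following. This is only a minimal sketch, not the exact commands used for the festival videos: the file names, timestamps, the 1920x1080 target size and the encoder settings are placeholders, and `build_ffmpeg_command` and `run_jobs` are hypothetical helper names.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor


def build_ffmpeg_command(src, start, end, dst):
    """Build one cut/upscale/loudness/re-encode command (illustrative settings only)."""
    return [
        "ffmpeg", "-y",
        "-ss", start, "-to", end,   # cut one talk out of the stream dump
        "-i", src,
        "-vf", "scale=1920:1080",   # upscale; the target size is an assumption
        "-af", "loudnorm",          # loudness normalization filter
        "-c:v", "libx264",
        "-c:a", "aac",
        dst,
    ]


def run_jobs(commands, max_parallel=4):
    """Run the commands with at most max_parallel at a time; return exit codes in order."""
    def run(cmd):
        return subprocess.run(cmd).returncode

    # Threads are enough here: each worker only waits for an external process.
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(run, commands))


if __name__ == "__main__":
    # Hypothetical talks cut from a hypothetical dump file.
    jobs = [
        build_ffmpeg_command("dump.mp4", "00:10:00", "01:05:00", "talk1.mp4"),
        build_ffmpeg_command("dump.mp4", "01:15:00", "02:00:00", "talk2.mp4"),
    ]
    for job in jobs:
        print(" ".join(job))
    # To actually encode, call: run_jobs(jobs, max_parallel=3)
```

Capping the pool at 3 or 4 workers mirrors the approach described above: each ffmpeg instance works on its own video, so several of them together can keep more of the CPU busy than a single instance does.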

Last time I checked, a vCPU on AWS was a single thread on a hyperthreading CPU. So 16 vCPUs would be a CPU with 8 real cores and 16 threads. Also, Xeons with many cores often have a lower clock speed (compared with a quad-core desktop CPU). A c5.4xlarge (16 vCPUs) on AWS runs at 3.4GHz. What did you use exactly?
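To put numbers on that: if one vCPU is one hardware thread, which is an assumption that may not hold for the provider used in the post, the like-for-like ratio between the server and the notebook is smaller than 8. A small sketch of the arithmetic (`physical_cores` is a hypothetical helper):

```python
def physical_cores(vcpus, threads_per_core=2):
    """Physical cores behind a vCPU count, assuming one vCPU is one hardware thread."""
    return vcpus // threads_per_core


notebook_cores, notebook_threads = 2, 4   # Lenovo X250, as stated in the post
server_vcpus = 16

server_cores = physical_cores(server_vcpus)        # 8 under the assumption above
core_ratio = server_cores / notebook_cores         # cores vs. cores
thread_ratio = server_vcpus / notebook_threads     # threads vs. threads

print(server_cores, core_ratio, thread_ratio)  # prints: 8 4.0 4.0
```

Under that assumption the server has 4 times the cores and threads of the notebook rather than 8 times, before clock speed and CPU load are even taken into account.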

How fast (clock) is your notebook?

What does libx264 say about your CPU capabilities (“using CPU capabilities”, in the middle of your server screenshot)?

You could also check whether you can use the hardware acceleration in your CPU for the re-encoding. Intel QuickSync is quite nice these days (given that you have a fairly recent processor).
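One way to check is to look for QuickSync encoders such as `h264_qsv` in the output of `ffmpeg -encoders`. The sketch below assumes `ffmpeg` is on the PATH; `quicksync_encoders` and `list_encoders` are hypothetical helper names. Note that a listed encoder only means the ffmpeg build includes it, not that the hardware and drivers are actually usable.

```python
import subprocess


def quicksync_encoders(encoder_listing):
    """Return the encoder names ending in '_qsv' found in `ffmpeg -encoders` output."""
    names = []
    for line in encoder_listing.splitlines():
        fields = line.split()
        # Encoder rows have the form: <flags> <name> <description...>
        if len(fields) >= 2 and fields[1].endswith("_qsv"):
            names.append(fields[1])
    return names


def list_encoders(ffmpeg="ffmpeg"):
    """Return the encoder listing of the local ffmpeg build as text."""
    result = subprocess.run(
        [ffmpeg, "-hide_banner", "-encoders"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

Calling `quicksync_encoders(list_encoders())` on the notebook and on the cloud server would show whether either build offers QuickSync encoders at all.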

Just found your blog, great stuff! I’ve used your Conversations app as well.