I recently bought a new printer and have been looking into how printers are detected on the network and how page data is sent to them. Why? Just out of interest, and to understand how modern printing over the local network works. I have three HP printers at home, but I would also like to know which document formats printers from other manufacturers understand. So if you are running Linux and have a printer on your local network (not connected via USB), would you mind looking up its document format capabilities and letting me know? On the shell this is quite easy:

```shell
# Detect all printers in the local network
$ ippfind -l
ipp://YYYYYYYYYY:631/ipp/print idle accepting-jobs none

# YYYYYYYYY is the IP address or domain name of the printer
$ ipptool -tv ipp://YYYYYYYYYYYY:631/ipp/print get-printer-attributes.test | grep "document-format\|printer-make-and-model"
```
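Under the hood, ipptool sends an IPP Get-Printer-Attributes request (operation ID 0x000B) to the printer as an HTTP POST with the content type `application/ipp`, and the printer replies with its attribute list, which grep then filters. The following is a minimal sketch of how such a request is encoded, assuming the IPP/1.1 binary encoding from RFC 8010 and a shell whose `printf` understands octal escapes (bash or dash). The helper names `ipp_u16`, `ipp_attr` and `build_gpa_request` are hypothetical, and the reply is only dumped, not decoded.

```shell
# Hypothetical helper: write a 16-bit big-endian integer as two raw bytes.
ipp_u16() {
  printf "\\$(printf '%03o' $(( ($1 >> 8) & 255 )))"
  printf "\\$(printf '%03o' $(( $1 & 255 )))"
}

# Hypothetical helper: encode one attribute value as
# value-tag, name-length, name, value-length, value (RFC 8010).
# An empty name marks an additional value of the previous attribute.
# Lengths come from ${#...}, so names and values are assumed to be ASCII.
ipp_attr() {
  printf "\\$(printf '%03o' $(( $1 )))"
  ipp_u16 "${#2}"; printf '%s' "$2"
  ipp_u16 "${#3}"; printf '%s' "$3"
}

# Hypothetical helper: build a Get-Printer-Attributes request that asks
# only for the make/model and the supported document formats.
build_gpa_request() {
  printf '\001\001'          # version-number: IPP/1.1
  printf '\000\013'          # operation-id: Get-Printer-Attributes (0x000B)
  printf '\000\000\000\001'  # request-id: 1
  printf '\001'              # operation-attributes-tag
  ipp_attr 0x47 attributes-charset utf-8
  ipp_attr 0x48 attributes-natural-language en
  ipp_attr 0x45 printer-uri "$1"
  ipp_attr 0x44 requested-attributes document-format-supported
  ipp_attr 0x44 '' printer-make-and-model   # second value of the same attribute
  printf '\003'              # end-of-attributes-tag
}

# Usage (needs curl and network access; HOST is a placeholder). An ipp://
# URI is transported as plain HTTP on the same port:
# build_gpa_request ipp://HOST:631/ipp/print |
#   curl -s --data-binary @- -H 'Content-Type: application/ipp' \
#     http://HOST:631/ipp/print | od -c | head
```

Building the bytes by hand is only meant to show what travels over the wire; for real queries, ipptool handles encoding, decoding and error reporting.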

The ipptool command then prints the printer’s make and model along with the document formats it supports. I’d be very grateful if you could post the result in the comments below. Many thanks in advance!
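If ippfind lists more than one printer, copying each URI by hand gets tedious. The following is a minimal sketch that queries every discovered printer in one go, assuming the `ippfind -l` output format shown above (printer URI in the first column). The function names `printer_uris` and `query_all_printers` are hypothetical helpers, not part of CUPS.

```shell
# Hypothetical helper: keep only the printer URI (first column) of each
# line printed by `ippfind -l`.
printer_uris() {
  awk '{ print $1 }'
}

# Hypothetical helper: query every discovered printer and print its
# make/model and document-format attributes, one section per printer.
query_all_printers() {
  ippfind -l | printer_uris | while read -r uri; do
    echo "== $uri"
    # stdin is redirected so ipptool cannot consume the remaining URIs
    ipptool -tv "$uri" get-printer-attributes.test </dev/null \
      | grep "document-format\|printer-make-and-model"
  done
}
```

After pasting both functions into a shell, running `query_all_printers` prints one block of attributes per printer.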

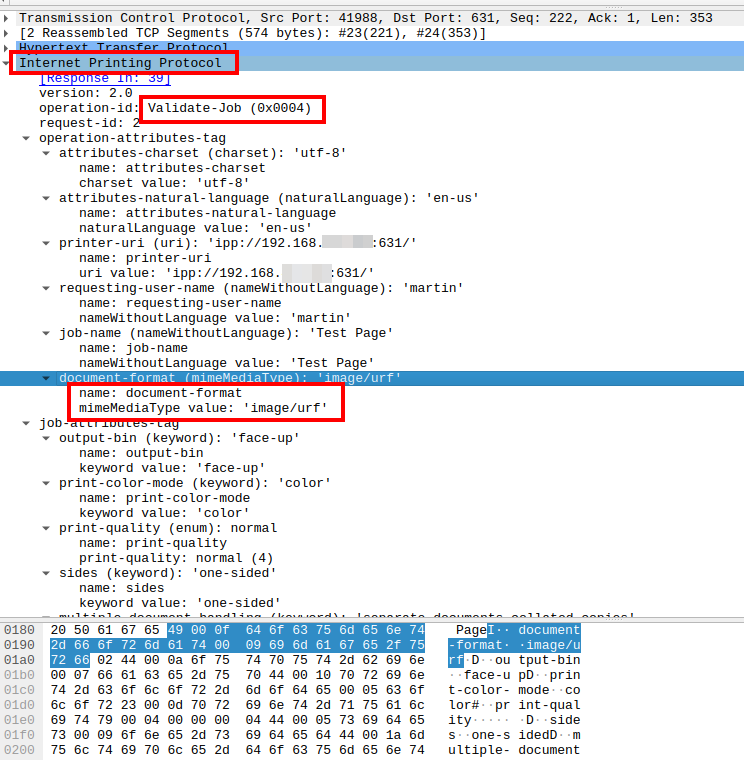

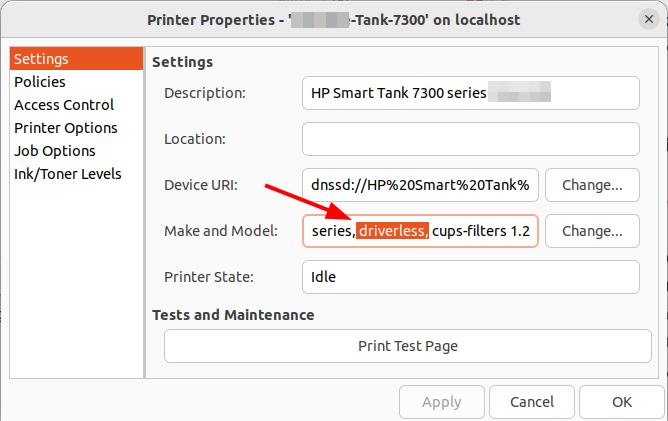

To give you an idea of what this looks like, here’s the output returned by my new printer:

```
printer-make-and-model (textWithoutLanguage) = HP Smart Tank 7300 series
document-format-default (mimeMediaType) = application/octet-stream
document-format-supported (1setOf mimeMediaType) = application/vnd.hp-PCL,image/jpeg,image/urf,image/pwg-raster,application/PCLm,application/octet-stream
document-format-version-supported (1setOf textWithoutLanguage) = PCL3GUI,PCL3,PJL,Automatic,JPEG,AppleRaster,PWGRaster,PCLM
document-format-details-default (collection) = {document-format=application/octet-stream}
document-format-varying-attributes (1setOf keyword) = copies,hp-color-working-spaces-supported
```
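To compare results from different printers, it can help to split the comma-separated `document-format-supported` value into one MIME type per line. A minimal sketch, assuming the `name (syntax) = value` line format shown above; `list_formats` and `describe_format` are hypothetical helpers, and the labels are short descriptions of a few common formats, not an exhaustive list.

```shell
# Hypothetical helper: read ipptool output on stdin and print each value of
# document-format-supported on its own line.
list_formats() {
  sed -n 's/^[[:space:]]*document-format-supported ([^)]*) = //p' | tr ',' '\n'
}

# Hypothetical helper: short label for some common printer MIME types.
describe_format() {
  case $1 in
    application/pdf)          echo "PDF" ;;
    application/postscript)   echo "PostScript" ;;
    application/vnd.hp-PCL)   echo "HP PCL" ;;
    application/PCLm)         echo "PCLm (raster-only PDF subset)" ;;
    image/pwg-raster)         echo "PWG Raster (IPP Everywhere)" ;;
    image/urf)                echo "Apple Raster (AirPrint)" ;;
    image/jpeg)               echo "JPEG" ;;
    application/octet-stream) echo "auto-detect (format sensed by the printer)" ;;
    *)                        echo "other" ;;
  esac
}

# Usage (HOST is a placeholder):
# ipptool -tv ipp://HOST:631/ipp/print get-printer-attributes.test | list_formats |
#   while read -r f; do printf '%-26s %s\n' "$f" "$(describe_format "$f")"; done
```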